“US Officials Say China Suspected in Breach of FBI Surveillance System”


Brief Overview

- The FBI is investigating a breach of one of its internal networks that is thought to be linked to China.

- The breach affects an unclassified network that contains sensitive data on domestic surveillance.

- US entities, including the White House, NSA, and CISA, are taking part in the investigation.

- The investigation is at an early stage, and details on the extent of the breach are not yet available.

Suspected Chinese Cyber Breach of FBI Network

In a notable cybersecurity incident, US officials believe that hackers connected to the Chinese government have compromised an internal computer network of the Federal Bureau of Investigation (FBI). The network is believed to contain sensitive data related to domestic surveillance directives.

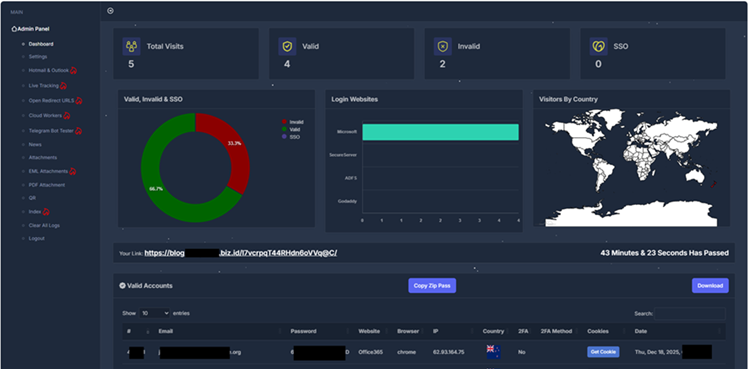

Breach Insights

The breach involves an unclassified FBI system that holds data on the communications of individuals currently under investigation. The FBI noted the advanced tactics used by the hackers and has begun remediation alongside ongoing forensic analysis.

Joint Investigation Initiatives

Multiple US entities, including the White House, the National Security Agency (NSA), and the Cybersecurity and Infrastructure Security Agency (CISA), are actively participating in the investigation. A White House official confirmed that discussions on cyber threats are held regularly, although details of this incident have not been released.

Conclusion

The suspected infiltration of an FBI network by hackers with alleged Chinese ties illustrates the ongoing risk that cyber intrusions pose to national security systems. As the investigation continues, the cooperation among US security agencies underscores the urgency of protecting sensitive data.